On-premises data storage offers compelling advantages for industrial sectors grappling with massive data volumes from IoT, AI analytics, and real-time operations, and its low-latency access and local control may let it rival cloud hyperscalers. This article tests that hypothesis by examining performance, costs, scalability innovations, and real-world industrial applications as of 2026.

Hypothesis Overview

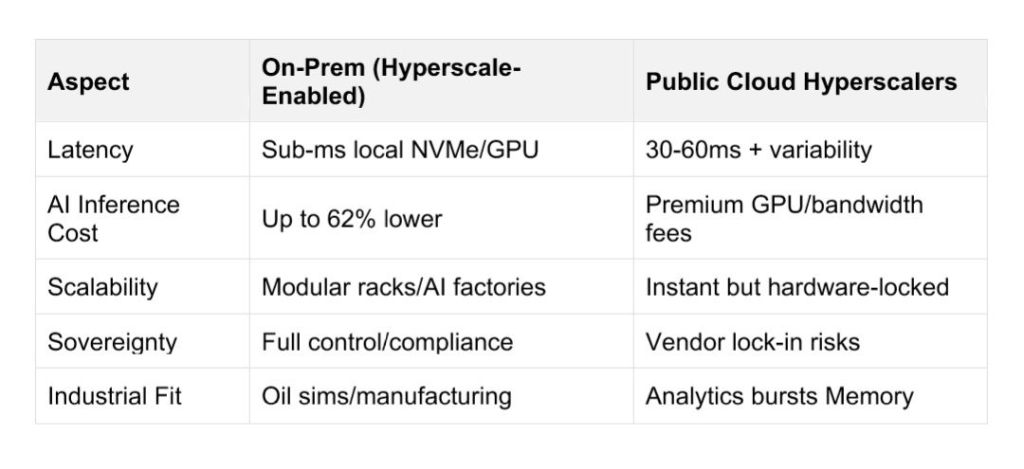

Industrial operations in sectors such as oil & gas, manufacturing, and mining generate petabytes of data daily from sensors, robotics, and simulations, far beyond consumer data loads. Traditional cloud hyperscalers (AWS, Azure, Google Cloud) provide elastic scaling but introduce network latency (30-60 ms round trips) and data sovereignty risks that are ill-suited to mission-critical operations. The hypothesis is that on-prem setups, enhanced by “AI factories” and hyperscale-like architectures, offer a superior alternative by keeping data local for sub-millisecond access while still allowing modular expansion.
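To make the latency argument concrete, the sketch below counts how many strictly sequential request/response cycles fit into one second at a given round-trip time, and checks whether a single round trip fits inside a control-loop period. The 30-60 ms range comes from the text; the 0.5 ms local figure and the 10 ms loop period are illustrative assumptions, not measurements.

```python
def max_sequential_round_trips(round_trip_ms: float, window_ms: float = 1000.0) -> int:
    """Upper bound on strictly sequential request/response cycles in a time window."""
    if round_trip_ms <= 0:
        raise ValueError("round_trip_ms must be positive")
    return int(window_ms // round_trip_ms)


def fits_control_loop(round_trip_ms: float, loop_period_ms: float) -> bool:
    """True if one storage round trip completes within a single control-loop period."""
    return round_trip_ms <= loop_period_ms


LOOP_PERIOD_MS = 10.0  # hypothetical control-loop period, not from the article

scenarios = {
    "cloud, best case (30 ms)": 30.0,   # lower end of the range quoted in the text
    "cloud, worst case (60 ms)": 60.0,  # upper end of the range quoted in the text
    "local, assumed 0.5 ms": 0.5,       # assumption standing in for "sub-millisecond"
}

for label, rtt_ms in scenarios.items():
    cycles = max_sequential_round_trips(rtt_ms)
    ok = fits_control_loop(rtt_ms, LOOP_PERIOD_MS)
    print(f"{label}: {cycles} round trips/s, fits {LOOP_PERIOD_MS:.0f} ms loop: {ok}")
```

Under these assumptions a 60 ms round trip allows at most 16 dependent operations per second, while a 0.5 ms local path allows 2,000. Workloads that can batch or pipeline requests are less sensitive to this bound than tightly coupled control loops.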

Performance Edge

On-prem NVMe storage delivers consistent low-latency access, which is critical for industrial automation, whereas cloud block storage such as AWS EBS volumes can show variable latency. Dedicated GPUs avoid cloud overhead and bandwidth throttling, which suits AI-driven reservoir modeling and predictive maintenance. Dell’s AI Factory, for instance, deploys GPU-dense racks at 127 kW per rack with direct-to-chip cooling, aiming at fault-tolerant, hyperscale-class capacity on site in facilities of 100 MW and above.
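As a rough sense of scale for those figures, the following sketch estimates how many 127 kW racks a 100 MW facility could feed. It assumes the 100 MW figure is total facility power and introduces a power usage effectiveness (PUE) factor to account for cooling and other overhead; the PUE value of 1.2 is a hypothetical input, not a number from the article or from Dell.

```python
import math


def racks_supported(facility_mw: float, rack_kw: float, pue: float = 1.0) -> int:
    """Whole racks a facility can power.

    facility_mw: total facility power in megawatts (assumed to include overhead).
    rack_kw:     power draw per rack in kilowatts.
    pue:         power usage effectiveness (total power / IT power), >= 1.0.
    """
    if facility_mw <= 0 or rack_kw <= 0:
        raise ValueError("facility_mw and rack_kw must be positive")
    if pue < 1.0:
        raise ValueError("pue cannot be below 1.0")
    it_power_kw = facility_mw * 1000.0 / pue  # power left for IT equipment
    return math.floor(it_power_kw / rack_kw)


# Figures from the text: 100 MW facility, 127 kW per rack.
print(racks_supported(100, 127))            # ideal case, no overhead
print(racks_supported(100, 127, pue=1.2))   # hypothetical PUE of 1.2
```

With no overhead the estimate is 787 racks; with the assumed PUE of 1.2 it drops to 656, which shows how sensitive rack counts are to cooling efficiency at these densities.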

Cost and Sovereignty Realities

For stable, high-volume workloads, cloud costs can escalate by 50-80% because of egress fees and GPU premiums, while on-prem yields 62% savings on AI inference according to Enterprise Strategy Group data. Regulated industries favor on-prem for compliance because proprietary seismic or production data stays in-house without third-party exposure. Hybrid extensions like AWS Outposts or Dell’s edge clusters blend both models, treating on-prem capacity as scalable “pods.”
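The cost trade-off can be framed as a break-even calculation: on-prem carries an upfront capital cost but a lower monthly run rate, while cloud spend for a steady workload includes compute plus egress. The sketch below is a simplified model under that framing. Every dollar figure, the egress rate, and the function names are hypothetical placeholders, not vendor pricing or data from the article.

```python
import math
from typing import Optional


def cloud_monthly_cost(compute_usd: float, egress_tb: float, egress_usd_per_tb: float) -> float:
    """Monthly cloud bill modeled as compute plus data egress charges."""
    return compute_usd + egress_tb * egress_usd_per_tb


def break_even_months(capex_usd: float, on_prem_monthly_usd: float,
                      cloud_monthly_usd: float) -> Optional[int]:
    """First month at which cumulative on-prem cost is no higher than cumulative cloud cost.

    Returns None when on-prem never catches up (its monthly cost is not lower).
    """
    monthly_saving = cloud_monthly_usd - on_prem_monthly_usd
    if monthly_saving <= 0:
        return None
    return math.ceil(capex_usd / monthly_saving)


# Hypothetical inputs for illustration only.
cloud = cloud_monthly_cost(compute_usd=80_000, egress_tb=200, egress_usd_per_tb=100)
months = break_even_months(capex_usd=1_200_000, on_prem_monthly_usd=40_000,
                           cloud_monthly_usd=cloud)
print(f"cloud monthly: ${cloud:,.0f}; break-even after {months} months")
```

With these placeholder inputs the on-prem investment pays back after 20 months. The model ignores hardware refresh cycles, staffing, and discounts for committed cloud usage, all of which would shift the result in a real evaluation.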

Industrial Case Studies

Oil & gas operators use on-prem HPC for 3D/4D seismic imaging and chemical simulations, processing vast datasets faster than cloud hybrids. Dell’s 1,700 AI micro-factories, deployed at retailers like Lowe’s, extend the same model to manufacturing, enabling edge-to-factory scaling without public cloud dependency. Mining firms use NVMe clusters for real-time ore analysis, which also keeps data local in regions that ban exporting it to the cloud.

Scalability Challenges Addressed

Traditional on-prem infrastructure scaled slowly through hardware installs, but as of 2026 software-defined storage (SDS) and NVIDIA-integrated platforms like Cloudian’s HyperScale enable rapid, pod-like growth. Energy-efficient SSDs and liquid cooling support hyperscale densities (127 kW per rack) sustainably, helping to counter power grid queues. Hybrids with hyperscaler “on-prem cloud” offerings (e.g., Google Distributed Cloud) provide burst capacity.
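Pod-like growth can be planned with simple capacity arithmetic: given a starting data volume, a steady growth rate, and a fixed usable capacity per pod, compute how many pods are needed each month and how many must be added. The sketch below assumes linear growth and a uniform pod size. The pod capacity, growth rate, and function names are hypothetical and do not describe any vendor's product.

```python
import math
from typing import List, Tuple


def pods_required(capacity_pb: float, pod_usable_pb: float) -> int:
    """Smallest number of whole pods that covers the required capacity."""
    if pod_usable_pb <= 0:
        raise ValueError("pod_usable_pb must be positive")
    return math.ceil(capacity_pb / pod_usable_pb)


def expansion_plan(start_pb: float, monthly_growth_pb: float,
                   pod_usable_pb: float, months: int) -> List[Tuple[int, int, int]]:
    """Return (month, total_pods, pods_added) for each month, assuming linear growth."""
    plan = []
    current_pods = pods_required(start_pb, pod_usable_pb)
    for month in range(1, months + 1):
        needed = pods_required(start_pb + monthly_growth_pb * month, pod_usable_pb)
        plan.append((month, needed, needed - current_pods))
        current_pods = needed
    return plan


# Hypothetical inputs: 10 PB today, 3 PB/month growth, 5 PB usable per pod.
for month, total, added in expansion_plan(10, 3, 5, months=4):
    print(f"month {month}: {total} pods in service, {added} added")
```

A plan like this makes procurement lead times visible: any month with a nonzero "added" count is a month by which new hardware must already be racked, which is where hybrid burst capacity can cover a gap.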

Verdict: Viable Cloud Alternative?

On-prem hyperscaling via AI factories proves effective for industrial mass consumption, excelling in latency, cost, and control where pure cloud falters, which validates the hypothesis for sovereignty-focused sectors. Full replacement remains rare, however: the reported shift of 85% of AI workloads to hybrid or on-prem deployments suggests strategic complementarity rather than outright substitution. Modular on-prem is likely to gain further ground as data sovereignty requirements and AI inference costs intensify.